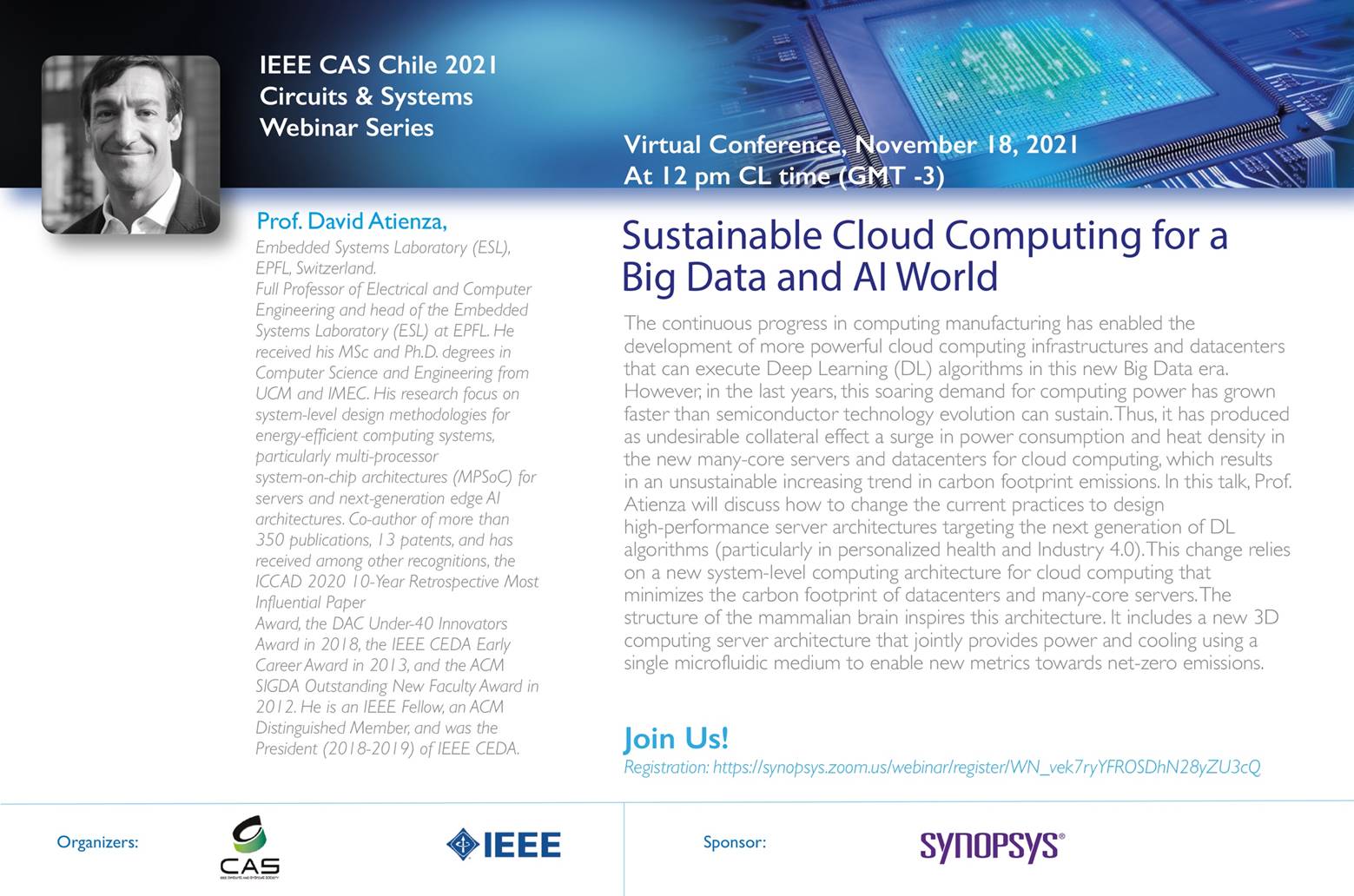

Sustainable Cloud Computing for a Big Data and AI World

Continuous progress in computing hardware manufacturing has enabled increasingly powerful cloud computing infrastructures and datacenters that can execute Deep Learning (DL) algorithms in this new Big Data era. In recent years, however, the demand for computing power has grown faster than semiconductor technology can sustain. As an undesirable side effect, power consumption and heat density have surged in the new many-core servers and datacenters used for cloud computing, driving an unsustainable upward trend in carbon emissions.

In this talk, Prof. Atienza discussed how to change current practices for designing high-performance server architectures that target the next generation of DL algorithms, particularly in personalized health and Industry 4.0. The proposed change relies on a new system-level computing architecture for cloud computing, inspired by the structure of the mammalian brain, that minimizes the carbon footprint of datacenters and many-core servers. First, it includes a new 3D computing server architecture that uses a single microfluidic medium to provide both power and cooling, enabling new metrics towards net-zero emissions. Second, it exploits new memory technologies for efficient computing on many-core servers. Finally, it champions a new system-level run-time thermal-energy management approach, based on machine learning, that dynamically controls the energy the system spends according to the status of the electrical grid.